See how your video hits the brain, before it hits the feed.

No ads. No spam. Just brutally honest feedback on your content.

THE ATTENTION MARKET IS RIGGED

Analytics tell you what happened.

Your brain knows what will.

You only find out a video flopped after you’ve pushed it to your audience, burned budget, and prayed to the algorithm. Watch-time graphs, A/B tests, and CTR dashboards are all backward-looking. They show you where people dropped off, not why they dropped off, and definitely not what their brains were feeling in the first few seconds, when the decision was made.

Cogniview flips this. Upload your video and see how a brain would respond before you hit publish, so you’re not guessing your next hook or thumbnail in the dark.

You find out where they dropped after burning budget.

You see exactly how the brain reacts before you post.

MEET COGNIVIEW

Brain-simulated feedback for every frame of your video.

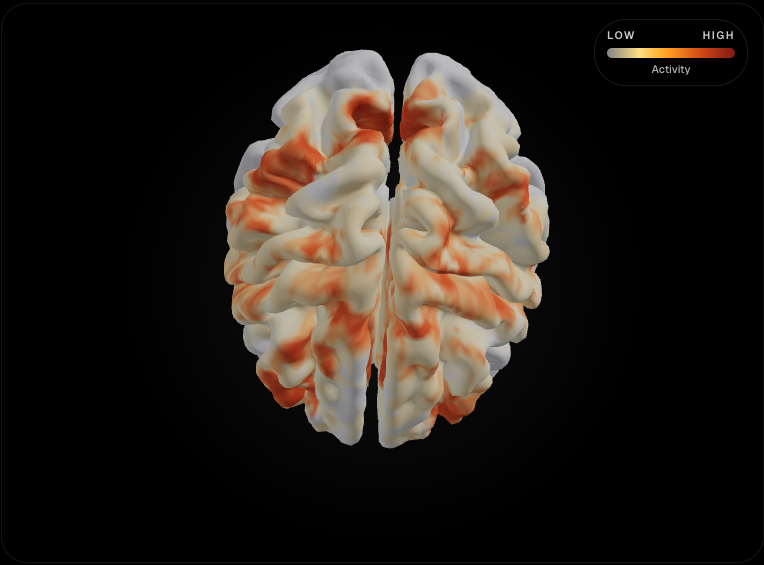

Cogniview is an AI layer built on top of brain-encoding models and multimodal video intelligence that simulate how real human brains light up when they watch your content.

It turns your raw video into a “brainprint” — showing predicted reward, confusion, and drop-off risk across time — and packages that into simple scores you can actually act on.

WHY THIS WORKS

Attention isn’t a vibe.

It’s a measurable brain response.

Neuroscience studies have found that the brain activity of a small group of viewers can forecast how long other people will watch the same videos, and even how widely those videos spread online.

Brain signals in reward and threat regions during just the first few seconds of a clip have been linked to higher watch-time and more views per day, outperforming self-reported ratings and traditional engagement metrics.

Cogniview builds on this work. Instead of asking people what they “liked,” it simulates the brain’s response to your video and converts that into virality, retention, and emotional-arc scores you can optimize against.

HOW IT WORKS

From raw video to brain-level feedback in four steps.

Upload

Drop your raw video file into the dashboard. No extra setup required.

Understand

Our multimodal AI breaks down the video frame by frame, analyzing visual cuts, audio shifts, and narrative pacing.

Simulate

The video is run through our proprietary brain-encoding models to predict cognitive load, emotional lift, and attention drops.

Scores & Edits

You get a second-by-second Retention Arc, an overall Virality Score, and specific timestamps where you need to tighten the edit.

WHAT YOU SEE INSIDE

Everything you wish your analytics dashboard could tell you.

Hook: 85
Pacing: 75
Audio: 80
Visual: 80
CTA: 85

A 17-year-old beat MyFitnessPal.

Now he makes $1.1M a month with a photo of your lunch.

Signal timeline

Attention and audio plotted second by second, with issue markers overlaid on the exact moments that need work.

4 Issues Found

AI Summary

This video starts strong with a compelling hook and generally good visual and audio quality, but early drops in viewer attention and a lack of emotional arousal suggest a missed opportunity to fully engage the audience and drive deeper connection.

Virality Score

A single metric that predicts how likely your video is to trigger the algorithm's reward loop based on early hook strength.

Retention Arc

A second-by-second graph showing exactly where viewer attention peaks and where it drops off.

Hook Analysis

Detailed breakdown of your first 3 seconds. Are you creating curiosity, or just creating noise?

Pacing Heatmap

Visualizes the speed of your cuts and audio shifts. Too fast causes cognitive overload; too slow causes boredom.

A/B Testing Simulator

Upload two different edits or hooks and see which one the brain prefers before you post.

Actionable Edits

Plain-English suggestions for fixing the video, such as “Cut the pause at 0:14” or “Add a visual pattern interrupt at 0:22.”

BUILT FOR PEOPLE WHO LIVE IN THE FEED

If your business depends on watch-time, this is for you.

Creators & storytellers

Tighten your scripts, nail your hooks, and stop guessing what “the algorithm wants.” Get brain-based feedback before you post, not hate after you post.

Performance marketers & media buyers

Stress-test ad creatives before scaling. Kill weak hooks early, double down on concepts with high predicted retention, and send fewer creatives to expensive A/B tests.

Agencies & studios

Turn creative reviews into a science. Add a Cogniview report to every client video to justify cuts, edits, and creative directions with brain-based evidence instead of “we just feel this is better.”

Product & growth teams

Use brain-predicted engagement to shape product videos, onboarding flows, and in-app education. Optimize for comprehension and retention, not just pretty visuals.

WHY COGNIVIEW, WHY NOW

Every other tool stares at the feed. We stare at the brain.

Most tools obsess over the platform: thumbnails, titles, hashtags, posting times, trending audio. That’s useful, but it’s downstream. The real battle is won in the first seconds, inside a viewer’s head.

Cogniview is one of the first creator tools built directly on brain-encoding research and multimodal models that understand video, audio, and text together — not just transcripts or pixels.

Instead of optimizing your content around a black-box algorithm, you’re optimizing around the thing the algorithm is secretly trying to measure: real human attention.

INSIDE THE DASHBOARD

A control room for your content’s brain response.

Timeline view

Scrub through your video and watch the retention arc, virality score, and emotional trajectory move with you. Every spike and dip is clickable and annotated.

Moment cards

Auto-generated cards that highlight “magic moments” (high predicted shareability) and “leak points” (where attention crashes), with reasons explained in plain language.

Edit recommendations

Concrete suggestions like “cut 3.2–5.4s,” “swap this B-roll for a face close-up,” or “move the CTA earlier” mapped to your editing timeline.

Experiment planner

Instantly spin out alternate hook ideas or intros based on your script and let Cogniview predict which version will hold more attention.

TRUSTED BY PEOPLE WHO CARE ABOUT EVERY FRAME

From solo creators to performance teams.

Researchers have already been using brain-based models in the lab to forecast engagement and virality. Now those tools are finally reaching creators, marketers, and growth teams in the real world. Cogniview brings that power into a clean interface you can use between drafts, edits, and uploads.

PRICING

Start with a few videos.

Scale with your content calendar.

Start free — no credit card required.

FAQ